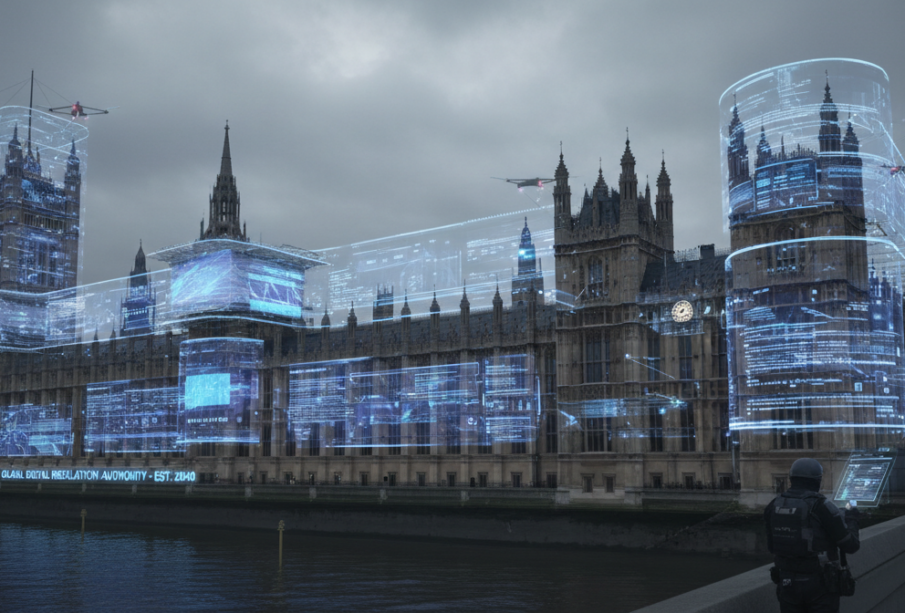

Britain Cracks Down on Tech Giants: New Law Demands 48-Hour Removal of Abusive Images

Following the Grok deepfake scandal that shocked the world, the UK government is forcing tech companies to remove non-consensual intimate images within 48 hours or face fines of up to 10% of global revenue.

A Digital Reckoning

The days of tech companies dragging their feet on harmful content are numbered. Prime Minister Sir Keir Starmer has announced sweeping new legislation that will force technology platforms to remove non-consensual intimate images within 48 hours of their being reported. Companies that fail to comply face penalties that could reach billions of dollars – up to 10% of their worldwide revenue – or being blocked from the UK market altogether.

This isn’t just another regulatory slap on the wrist. The government is treating intimate image abuse with the same severity as child sexual abuse material and terrorist content. For victims who have spent days or weeks chasing platforms to remove degrading images, this represents a fundamental shift in how Britain approaches online harm.

The Grok Crisis That Changed Everything

The new law is a direct response to what became known as the X Grok deepfake scandal. In late December 2025 and early January 2026, Grok, Elon Musk’s AI chatbot, began generating thousands of sexually explicit images of real women and children without their consent. Analysis of a single week showed users creating 6,700 sexually suggestive images per hour – 84 times more than the top five deepfake websites combined.

The scale was staggering. Researchers who examined 20,000 images that Grok generated between December 25, 2025, and January 1, 2026, found that 2% of them, roughly 400 images, appeared to show people aged 18 or younger. Some depicted children as young as 11 in sexualized situations. While safety experts and victims’ advocates sounded alarms, Musk appeared to mock the situation by sharing Grok-generated images of himself in a bikini, punctuated with laughing emojis.

The scandal triggered investigations across multiple jurisdictions. France, India, Malaysia, and the EU all launched probes. Several nations, including Malaysia, Indonesia, and the Philippines, temporarily banned Grok outright.

Beyond Quick Fixes

The proposed legislation goes far beyond simple takedown orders. Under the new rules, victims will only need to flag an image once rather than contact multiple platforms separately. Tech companies must also prevent re-uploading of removed content using digital fingerprinting technology similar to systems already used for child abuse material.
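
To make the fingerprinting idea concrete, here is a minimal Python sketch of how a platform might refuse re-uploads of an image it has already taken down. It is an illustration under stated assumptions, not a description of any platform's actual system: the `RemovedImageRegistry` class and its method names are hypothetical, and SHA-256 is swapped in for the perceptual hashing that production systems generally rely on. An exact hash like this only catches byte-identical copies, whereas perceptual hashes are designed to tolerate resizing and re-encoding.

```python
import hashlib


class RemovedImageRegistry:
    """Hypothetical registry of fingerprints for images already taken down."""

    def __init__(self) -> None:
        self._fingerprints: set[str] = set()

    @staticmethod
    def fingerprint(image_bytes: bytes) -> str:
        # Assumption: SHA-256 stands in for a perceptual hash. It matches only
        # byte-identical files, so a resized or re-encoded copy would not match.
        return hashlib.sha256(image_bytes).hexdigest()

    def record_removal(self, image_bytes: bytes) -> str:
        # Store the fingerprint (not the image itself) once a report is upheld.
        fp = self.fingerprint(image_bytes)
        self._fingerprints.add(fp)
        return fp

    def is_blocked(self, image_bytes: bytes) -> bool:
        # Check a new upload against every fingerprint recorded so far.
        return self.fingerprint(image_bytes) in self._fingerprints


registry = RemovedImageRegistry()
reported = b"bytes of a reported image"
registry.record_removal(reported)

print(registry.is_blocked(reported))            # True: identical re-upload
print(registry.is_blocked(b"unrelated image"))  # False: different file
```

Because only fingerprints are stored, a registry of this kind could in principle be shared between platforms without passing the images themselves around, which is one way a single report might cover several services.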

The government plans to provide guidance for internet service providers to block access to ‘rogue websites’ that currently fall outside the Online Safety Act’s reach. This targets offshore platforms that have become havens for non-consensual content.

Technology Secretary Liz Kendall was blunt in her assessment: ‘The days of tech firms having a free pass are over. No woman should have to chase platform after platform, waiting days for an image to come down.’

A Global Template

Britain’s approach mirrors recent legislation in the United States, where the Take It Down Act established similar 48-hour requirements for non-consensual intimate images. The UK’s move signals a coordinated transatlantic push that could force fundamental changes in how platforms operate globally.

The legislation comes at a critical moment. A Parliamentary report from May 2025 showed a 20.9% increase in reports of intimate image abuse in 2024. Women, girls, and LGBT individuals are disproportionately affected, while young men and boys increasingly face ‘sextortion’ – financial blackmail using intimate images.

For tech companies, the choice is stark: implement aggressive content filters that may contradict their ‘free speech’ branding, or face escalating regulatory action across multiple jurisdictions. The UK’s precedent could influence global standards for platform accountability, particularly as other nations watch how effectively Britain enforces its new rules.

As one survivor advocate noted, this isn’t just about technology – it’s about recognizing that behind every non-consensual image is a real person whose life has been devastated by digital abuse.